
Revision as of 10:02, 8 July 2015

| Personalized Problems | |
|---|---|
| Contributors | |
| Last modification | July 8, 2015 |
| Source | |
| Pattern formats | OPR Alexandrian |
| Usability | |
| Learning domain | General |
| Stakeholders | Teachers, Students, System developers |
| Production | |
| Data analysis | Student affect and interaction behavior in ASSISTments |
| Confidence | |
| Evaluation | PLoP 2015 writing workshop, Talk:ASSISTments |
| Application | ASSISTments |
| Applied evaluation | ASSISTments |

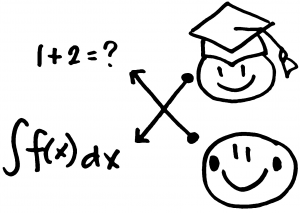

If students become bored or disengage from an exercise when they solve problems that are either too easy or too difficult for them, then assign them problems that they have the ability to solve.

Context

Students are asked to complete an exercise in an online learning system. Teachers design the exercise's problems and encode them into the system, together with the corresponding answers and feedback. Teachers decide on the difficulty of the problems according to a general assessment of student ability in their class.

Problem

Students become bored or disengage from the exercise when they solve problems that are either too easy or too difficult for them to solve.

Forces

- Prior knowledge. Students may find it impossible to solve a problem when they have not acquired the skills needed to solve it[1].

- Expertise reversal. Presenting students with information they already know can impose extraneous cognitive load and interfere with further learning[1].

- Risk taking. Students who are risk takers prefer challenging tasks because they find satisfaction in maximizing their learning. However, students who are not risk takers often experience anxiety when they feel the difficulty of a learning task has exceeded their skill[2].

- Learning rate. Students learn at varying rates, which could be affected by their prior knowledge, learning experience, and the quality of instruction they receive[3].

- Limited resources. Student attention and patience are limited resources, possibly affected by pending deadlines, upcoming tests, achievement in previous learning experiences, personal interest, quality of instruction, and other factors[4][3].

Solution

Therefore, assign to students problems that they have the ability to solve.

A student’s ability to solve a problem can be estimated from assessments of their knowledge of prerequisite skills or from model-based predictors, such as Bayesian knowledge tracing[5].
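One model-based predictor of the kind mentioned above is Bayesian knowledge tracing (BKT). The sketch below shows the standard, non-individualized BKT update for a single skill; it is not the individualized model described in [5]. The names `BKTParams`, `update_mastery`, and `predict_correct` are hypothetical, and the parameter values in the usage section are illustrative assumptions, not values fitted to ASSISTments data.

```python
from dataclasses import dataclass


@dataclass
class BKTParams:
    """Standard BKT parameters for one skill (illustrative, not fitted)."""
    p_init: float     # P(L0): probability the skill is known before practice
    p_transit: float  # P(T): probability of learning the skill at each opportunity
    p_slip: float     # P(S): probability of a wrong answer despite knowing the skill
    p_guess: float    # P(G): probability of a right answer without knowing the skill


def update_mastery(p_known: float, correct: bool, params: BKTParams) -> float:
    """Return P(skill known) after observing one answer."""
    if correct:
        evidence_known = p_known * (1 - params.p_slip)
        evidence_unknown = (1 - p_known) * params.p_guess
    else:
        evidence_known = p_known * params.p_slip
        evidence_unknown = (1 - p_known) * (1 - params.p_guess)
    # Bayes' rule: posterior probability that the skill was already known.
    posterior = evidence_known / (evidence_known + evidence_unknown)
    # Account for the chance that the student learned the skill on this step.
    return posterior + (1 - posterior) * params.p_transit


def predict_correct(p_known: float, params: BKTParams) -> float:
    """Probability that the student answers the next problem on this skill correctly."""
    return p_known * (1 - params.p_slip) + (1 - p_known) * params.p_guess


if __name__ == "__main__":
    # Hypothetical parameter values, chosen only for illustration.
    params = BKTParams(p_init=0.3, p_transit=0.1, p_slip=0.1, p_guess=0.2)
    p_known = params.p_init
    for correct in [True, True, False, True]:
        p_known = update_mastery(p_known, correct, params)
        print(f"P(known)={p_known:.3f}  P(next correct)={predict_correct(p_known, params):.3f}")
```

A system following this pattern could, for example, offer a harder problem only once `predict_correct` for its prerequisite skills is high enough; the threshold would be a design decision that the pattern itself does not specify.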

Consequences

Benefits

- Students have enough prior knowledge to solve a problem.

- Students do not need to “slow down” to adjust to the difficulty of the exercise.

- Risk takers will get challenging problems, while non-risk takers will not be overwhelmed by overly difficult problems.

- Students will solve problems appropriate for their skill level.

- Exercises that are neither too easy nor too challenging can motivate students to spend more time performing them.

Liabilities

- Content writers will need to provide content for students with different levels of ability.

- If students’ skill level is incorrectly identified, the system can still give students problems that are too easy or too difficult.

Evidence

Literature

Research in different learning domains has shown that personalizing content to students’ skill levels produced learning gains similar to those of non-personalized content in less time (e.g., simulated air traffic control[6], algebra[7], geometry[8], and health sciences[9]).

Discussion

In a meeting among ASSISTments stakeholders (Ryan Baker and his team at Teachers College, Columbia University; Neil Heffernan and his team at Worcester Polytechnic Institute; and Peter Scupelli and his team at the School of Design, Carnegie Mellon University), the participants agreed that some issues arise from a lack of personalization and that personalizing content to students' skill levels may help address them.

David West, the pattern's shepherd at PLoP 2015, also considered the pattern definition acceptable.

Data

According to an analysis of ASSISTments’ data, boredom and gaming behavior correlated with problem difficulty (as indicated by answer correctness and the number of hint requests).

Related patterns

This pattern applies the concept of Different exercise levels[10] to online learning systems, and Content personalization[11] to the selection of exercise problems. It can be used with Just enough practice to help students master skills and to select the next set of problems according to their learning progress. Worked examples can be used when students lack the skills needed to solve a problem.

Example

A teacher would encode into an online learning system a math exercise containing problems of varying difficulty. As students answer questions in their homework, the system would track their progress to identify their skill level: low (the student makes mistakes ≥ 60% of the time), medium (< 60% and ≥ 40% of the time), or high (< 40% of the time). Based on this skill level, the system would then provide problems of the corresponding difficulty, making it more likely that students receive questions that fit their skill level.
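The example's classification rule can be sketched as follows. This is a minimal illustration under stated assumptions: the functions `skill_level` and `next_problem` and the problem pool are hypothetical, the thresholds are the ones given in the example, and starting students with no answer history at "medium" is an added assumption.

```python
import random
from typing import Dict, List, Sequence

# Error-rate thresholds taken from the example above.
LOW_THRESHOLD = 0.6     # error rate >= 60% -> "low"
MEDIUM_THRESHOLD = 0.4  # 40% <= error rate < 60% -> "medium"; below 40% -> "high"


def skill_level(answers: Sequence[bool]) -> str:
    """Classify a student as 'low', 'medium', or 'high'.

    `answers` holds True for each correct answer and False for each mistake.
    """
    if not answers:
        # Assumption: a student with no history yet starts at "medium".
        return "medium"
    error_rate = sum(1 for correct in answers if not correct) / len(answers)
    if error_rate >= LOW_THRESHOLD:
        return "low"
    if error_rate >= MEDIUM_THRESHOLD:
        return "medium"
    return "high"


def next_problem(answers: Sequence[bool], pool: Dict[str, List[str]], rng: random.Random) -> str:
    """Pick a problem from the pool that matches the student's current skill level."""
    return rng.choice(pool[skill_level(answers)])


if __name__ == "__main__":
    # Hypothetical problem pool keyed by difficulty level.
    pool = {
        "low": ["Compute 2 + 3", "Compute 7 - 4"],
        "medium": ["Compute 12 * 3", "Compute 45 / 5"],
        "high": ["Solve 3x + 2 = 11", "Solve x^2 - 5x + 6 = 0"],
    }
    rng = random.Random(0)
    history = [True, True, False, True, True]  # 20% mistakes, so "high"
    print(skill_level(history), next_problem(history, pool, rng))
```

Counting every past answer equally is the simplest reading of the example; weighting recent answers more heavily would be a different design choice that the pattern does not prescribe.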

References

1. Sweller, J. (2004). Instructional design consequences of an analogy between evolution by natural selection and human cognitive architecture. Instructional Science, 32(1-2), 9-31.

2. Meyer, D. K., and Turner, J. C. (2002). Discovering emotion in classroom motivation research. Educational Psychologist, 37(2), 107-114.

3. Bloom, B. S. (1974). Time and learning. American Psychologist, 29(9), 682.

4. Arnold, A., Scheines, R., Beck, J. E., and Jerome, B. (2005). Time and attention: Students, sessions, and tasks. In Proceedings of the AAAI 2005 Workshop on Educational Data Mining (pp. 62-66).

5. Yudelson, M. V., Koedinger, K. R., and Gordon, G. J. (2013). Individualized Bayesian knowledge tracing models. In Artificial Intelligence in Education (pp. 171-180). Springer Berlin Heidelberg.

6. Salden, R. J., Paas, F., Broers, N. J., and Van Merriënboer, J. J. (2004). Mental effort and performance as determinants for the dynamic selection of learning tasks in air traffic control training. Instructional Science, 32(1-2), 153-172.

7. Cen, H., Koedinger, K. R., and Junker, B. (2007). Is over practice necessary? Improving learning efficiency with the Cognitive Tutor through educational data mining. Frontiers in Artificial Intelligence and Applications, 158, 511.

8. Salden, R. J. C. M., Aleven, V., Schwonke, R., and Renkl, A. (2010). The expertise reversal effect and worked examples in tutored problem solving. Instructional Science, 38, 289-307.

9. Corbalan, G., Kester, L., and van Merriënboer, J. J. G. (2008). Selecting learning tasks: Effects of adaptation and shared control on learning efficiency and task involvement. Contemporary Educational Psychology, 33, 733-756.

10. Bergin, J., Eckstein, J., Völter, M., Sipos, M., Wallingford, E., Marquardt, K., Chandler, J., Sharp, H., and Manns, M. L. (2012). Pedagogical patterns: Advice for educators. Joseph Bergin Software Tools.

11. Danculovic, J., Rossi, G., Schwabe, D., and Miaton, L. (2001). Patterns for personalized Web applications. In Proceedings of the 6th European Conference on Pattern Languages of Programs (pp. 423-436). Universitaetsverlag Konstanz, Germany.